What Size Battery Do I Need for Solar

A battery sized for keeping the refrigerator alive during a six-hour outage looks nothing like a battery sized for running air conditioning through a three-day grid failure. The number of kilowatt-hours needed flows directly from what those kilowatt-hours need to accomplish.

The solar industry loves complexity because complexity sells bigger systems. Installers quote whole-home backup packages to customers who really just want their internet router to stay online during thunderstorms. Online calculators ask for square footage, appliance counts, and regional climate data to produce recommendations that could have been reached in thirty seconds with one honest question: what exactly do you need this battery to do?

The Refrigerator Test

Start with the simplest possible case.

A refrigerator draws about 150 watts averaged across its cycling. Over 24 hours, that totals around 3.6 kWh. Add LED lighting, a WiFi router, phone charging. The daily total for true essentials lands somewhere around 5 kWh. A single Tesla Powerwall 3 at 13.5 kWh handles this load for more than two days without any solar input whatsoever. During sunny weather with panels actively charging, that same battery could maintain essential loads indefinitely.
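A minimal Python sketch of that tally, for readers who want to plug in their own appliances. Only the refrigerator's 150-watt average comes from the text; the wattages and hours for lighting, router, and charging are illustrative assumptions, and the 13.5 kWh is used at face value with no depth-of-discharge or efficiency derating.

```python
# Essential-load tally: (name, average watts, hours per day).
# Only the refrigerator's 150 W average comes from the text;
# the other entries are illustrative assumptions.
ESSENTIAL_LOADS = [
    ("refrigerator", 150, 24),
    ("LED lighting", 100, 6),
    ("WiFi router", 15, 24),
    ("phone/laptop charging", 50, 4),
]


def daily_kwh(loads):
    """Sum watts * hours for each load and convert Wh to kWh."""
    return sum(watts * hours for _, watts, hours in loads) / 1000


def runtime_days(battery_kwh, load_kwh_per_day):
    """Days a battery can carry a daily load with no solar recharge."""
    return battery_kwh / load_kwh_per_day


daily = daily_kwh(ESSENTIAL_LOADS)
days = runtime_days(13.5, daily)
print(f"Essential loads: {daily:.2f} kWh/day")
print(f"13.5 kWh battery, no solar: {days:.1f} days")
```

With these assumed loads the total comes to about 4.8 kWh per day, and the battery carries it for roughly 2.8 days without sun.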

This is the calculation that matters for probably 60 percent of battery buyers. They want food to stay cold and phones to stay charged during routine outages. They do not need multiple batteries. They do not need 40 kWh. One reasonably sized unit does the job with capacity to spare.

The industry hates this math because it leads to smaller sales. So the conversation gets steered toward whole-home backup, toward multi-day autonomy, toward scenarios involving simultaneous operation of every appliance in the house. These scenarios make for compelling sales presentations. They rarely reflect how people actually behave during power outages.

When the grid goes down, behavior changes. The clothes dryer sits idle. Air conditioning setpoints drift upward or the system gets switched off entirely. People dig out candles and flashlights instead of turning on every light in the house. Actual outage consumption runs maybe half of normal consumption for most households, sometimes less.

Sizing for normal consumption when outage consumption is the relevant number leads to oversized systems. Oversized systems cost more upfront, take longer to pay back, and the excess capacity still degrades whether it gets used or not. Lithium batteries age on the calendar regardless of cycling. Money spent on capacity that never gets touched is money wasted.

Air Conditioning Destroys the Math

Everything changes the moment somebody decides air conditioning must run during outages.

Central AC systems pull 3 to 5 kilowatts while operating. During a summer heat wave, the compressor might run eight or ten hours daily. That single appliance consumes 30 to 40 kWh per day, roughly six to eight times the essential-loads baseline. The refrigerator that seemed like the critical load suddenly becomes a rounding error.

Four Powerwall units totaling 54 kWh provide maybe one and a half days of aggressive AC use before depletion. Physics does not negotiate. Buyers in Phoenix or Houston who expect whole-home comfort during extended summer outages need either battery capacity that costs more than a decent used car, or a generator, or an honest conversation with themselves about thermal tolerance.
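The same arithmetic for air conditioning, sketched in Python. The 3 to 5 kW draw, the 8 to 10 hours of runtime, and the four 13.5 kWh units come from the text; adding the 5 kWh essentials baseline on top of the AC and assuming no solar recharge are simplifications made for this example.

```python
# Central AC during a heat wave, using the text's ranges:
# 3-5 kW while the compressor runs, 8-10 hours of runtime per day.
ESSENTIALS_KWH_PER_DAY = 5.0  # essentials baseline from the refrigerator test
BANK_KWH = 4 * 13.5           # four 13.5 kWh units = 54 kWh


def ac_kwh_per_day(running_kw, hours_per_day):
    """Daily AC energy: running power times compressor hours."""
    return running_kw * hours_per_day


results = []
for kw, hours in [(3, 10), (4, 8), (4, 10)]:
    ac = ac_kwh_per_day(kw, hours)
    # Assumes essentials run alongside the AC and no solar recharge.
    days = BANK_KWH / (ac + ESSENTIALS_KWH_PER_DAY)
    results.append((ac, days))
    print(f"{kw} kW x {hours} h: {ac} kWh/day of AC "
          f"({ac / ESSENTIALS_KWH_PER_DAY:.1f}x essentials), "
          f"54 kWh lasts {days:.1f} days")
```

These cases span 30 to 40 kWh of AC per day, six to eight times the essentials baseline, and the 54 kWh bank lasts roughly 1.2 to 1.5 days.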

The battery industry prefers not to facilitate that honest conversation because it complicates the sale. Easier to quote a big system and let the customer discover the limitations later.

The realistic middle ground involves running AC intermittently during outages. Cooling the house aggressively before an anticipated storm. Keeping temperatures bearable rather than comfortable. Setting the thermostat at 82 instead of 74 and accepting some discomfort. A 20 to 30 kWh system can maintain survivable conditions through multi-day outages with this approach.

Bearable and comfortable are not the same thing. Marketing materials never make that clear.

The glossy brochure shows a family lounging in perfect climate-controlled serenity while the grid burns down around them. Reality involves sweat and compromise.

There is also the startup surge problem, which catches people off guard. An AC system rated at 4 kW continuous might pull 12 kW or more for the fraction of a second needed to spin up the compressor. A battery with plenty of stored energy but insufficient power output will try to start that compressor, fail, trip its protection circuits, and leave the house hot despite showing 80 percent charge remaining on the app. The homeowner stares at the battery, stares at the dead AC, and wonders what went wrong.

What went wrong is that storage capacity and power output are different specifications serving different purposes, and the sales process emphasized one while glossing over the other.
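A small Python sketch makes the distinction concrete: a check that compares a motor's startup and running draw against a battery's surge and continuous ratings, ignoring stored energy entirely. Both battery specs are hypothetical example values, not any product's datasheet; the 4 kW running and 12 kW startup figures come from the example above, and the 0.5 kW of other household load is an assumption.

```python
from dataclasses import dataclass


@dataclass
class BatterySpec:
    """Hypothetical battery/inverter ratings, not any real product's."""
    name: str
    stored_kwh: float     # energy capacity
    continuous_kw: float  # output it can sustain
    surge_kw: float       # brief peak output for motor starts


def can_run_motor(spec, base_load_kw, running_kw, startup_kw):
    """True only if the unit can both start the motor and keep it running
    on top of the household load already flowing."""
    starts = base_load_kw + startup_kw <= spec.surge_kw
    sustains = base_load_kw + running_kw <= spec.continuous_kw
    return starts and sustains


big_storage = BatterySpec("big storage, weak inverter", 20.0, 5.0, 7.5)
strong_output = BatterySpec("less storage, strong inverter", 13.5, 10.0, 15.0)

# 4 kW running / 12 kW startup from the AC example; 0.5 kW base load assumed.
for spec in (big_storage, strong_output):
    ok = can_run_motor(spec, base_load_kw=0.5, running_kw=4.0, startup_kw=12.0)
    print(f"{spec.name} ({spec.stored_kwh} kWh): AC runs = {ok}")
```

The unit with more stored energy fails the start check while the smaller one passes, which is the mismatch between storage and power described above.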

LFP Won

Five years ago, choosing between lithium iron phosphate and nickel manganese cobalt chemistry involved real tradeoffs. LFP offered longer cycle life and better safety margins. NMC offered higher energy density and lighter weight. Reasonable people could disagree about which tradeoffs mattered more for home installations.

That debate has resolved. LFP won so completely that continuing to discuss it feels like explaining why VHS beat Betamax.

Tesla switched the Powerwall line to LFP. Enphase uses LFP exclusively. FranklinWH never used anything else. The manufacturers who still ship NMC are primarily working through existing production investments rather than making affirmative choices about optimal chemistry. When engineers start fresh today, they choose LFP.

The reasons stack up. LFP cells survive more charge cycles before degradation becomes noticeable. We are talking 4,000 to 6,000 cycles versus maybe 1,500 for NMC in comparable conditions. For a battery cycling daily, that difference translates to a decade of additional service life. LFP thermal runaway requires temperatures that residential installations essentially never reach. The chemistry is stable in ways that NMC chemistry is not. LFP contains no cobalt, which eliminates both supply chain volatility and the ethical complications of Congolese mining operations. LFP costs less per kilowatt-hour and the gap keeps widening as manufacturing scales.
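Converting those cycle ratings into years of daily cycling is a quick Python check. The cycle counts are the ones quoted above; one full cycle per day is an assumption, and calendar aging is ignored.

```python
DAYS_PER_YEAR = 365


def years_of_daily_cycling(cycles, cycles_per_day=1.0):
    """Years until the rated cycle count is used up at a steady pace."""
    return cycles / (cycles_per_day * DAYS_PER_YEAR)


nmc = years_of_daily_cycling(1500)
lfp_low = years_of_daily_cycling(4000)
lfp_high = years_of_daily_cycling(6000)
print(f"NMC: {nmc:.1f} years")
print(f"LFP: {lfp_low:.1f} to {lfp_high:.1f} years")
print(f"Extra service life: {lfp_low - nmc:.1f} to {lfp_high - nmc:.1f} years")
```

The gap works out to roughly 7 to 12 extra years, so "a decade" sits near the middle of the range.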

The sole disadvantage is energy density. LFP packs less storage into the same physical volume. For electric vehicles, where every kilogram of battery weight reduces driving range and cargo capacity, this disadvantage genuinely matters. For a box bolted to a garage wall, where the difference between a unit 24 inches wide and one 28 inches wide has zero practical consequence, energy density is irrelevant.

Buyers still encounter NMC recommendations occasionally. Generac uses NMC in some PWRcell configurations. Old LG Chem inventory still circulates through installer networks. When this happens, ask why NMC over LFP. Listen carefully to the answer. If it involves anything other than genuine physical space constraints that preclude a larger enclosure, something else is driving the recommendation. Maybe old inventory that needs to move. Maybe installer familiarity with a particular product line. Maybe commission structures that favor certain brands. Rarely the customer's actual interest.

Lead-acid batteries persist in price-focused marketing aimed at buyers who look at upfront cost and nothing else. The sticker price looks attractive at roughly half the cost per kilowatt-hour. That attraction evaporates under any scrutiny.

Lead-acid usable capacity runs about half of rated capacity because discharging below 50 percent accelerates degradation dramatically. A 10 kWh lead-acid bank delivers 5 kWh. Efficiency losses during charge and discharge run higher than with lithium. Cycle life runs one-third to one-fifth of LFP. Flooded lead-acid requires periodic maintenance: checking water levels, equalizing charges, cleaning terminals. The batteries weigh vastly more and occupy vastly more space.

Add it all up and lead-acid costs more per delivered kilowatt-hour over the system lifetime despite costing less at purchase. The only scenario where lead-acid makes sense is a seasonal cabin that might cycle twenty or thirty times per year and sit idle the rest of the time. For daily-cycling solar applications, lead-acid lost the argument years ago.
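A rough way to see the lifetime math is to spread the purchase price over every kilowatt-hour a battery delivers before it wears out. This Python sketch uses relative prices rather than real ones (LFP at 1.0 per rated kWh, lead-acid at 0.5 to match the "roughly half" figure), an assumed 90 percent usable capacity for LFP, and 50 percent for lead-acid. It deliberately ignores round-trip losses and maintenance, both of which would widen the gap.

```python
def cost_per_delivered_kwh(price_per_rated_kwh, usable_fraction, cycle_life):
    """Purchase price spread over the lifetime energy a battery delivers.
    Ignores round-trip losses and maintenance, which would further
    penalize lead-acid."""
    lifetime_kwh_per_rated_kwh = usable_fraction * cycle_life
    return price_per_rated_kwh / lifetime_kwh_per_rated_kwh


LFP_CYCLES = 4000  # low end of the 4,000-6,000 range

# Relative prices: LFP = 1.0 per rated kWh, lead-acid at roughly half.
lfp = cost_per_delivered_kwh(1.0, usable_fraction=0.9, cycle_life=LFP_CYCLES)
lead_best = cost_per_delivered_kwh(0.5, usable_fraction=0.5, cycle_life=LFP_CYCLES / 3)
lead_worst = cost_per_delivered_kwh(0.5, usable_fraction=0.5, cycle_life=LFP_CYCLES / 5)

print(f"Lead-acid vs LFP per delivered kWh: "
      f"{lead_best / lfp:.1f}x to {lead_worst / lfp:.1f}x")
```

Even granting lead-acid one-third of LFP's cycle life, the most generous figure above, it costs about 2.7 times as much per delivered kilowatt-hour; at one-fifth the ratio rises to 4.5. The ratio depends only on the price, usable-capacity, and cycle-life fractions, not on the absolute cycle count chosen for LFP.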

Quoted Capacity Versus Reality

Marketing quotes nominal capacity because larger numbers sell better.

LFP batteries tolerate routine discharge of roughly 80 to 90 percent of rated capacity without accelerated degradation. A battery marketed as 13.5 kWh therefore delivers about 11 to 12 kWh in practice. This gap is not deception exactly. It reflects real engineering decisions about longevity versus utilization. Pushing discharge deeper shortens cycle life. Manufacturers set limits that balance these concerns.

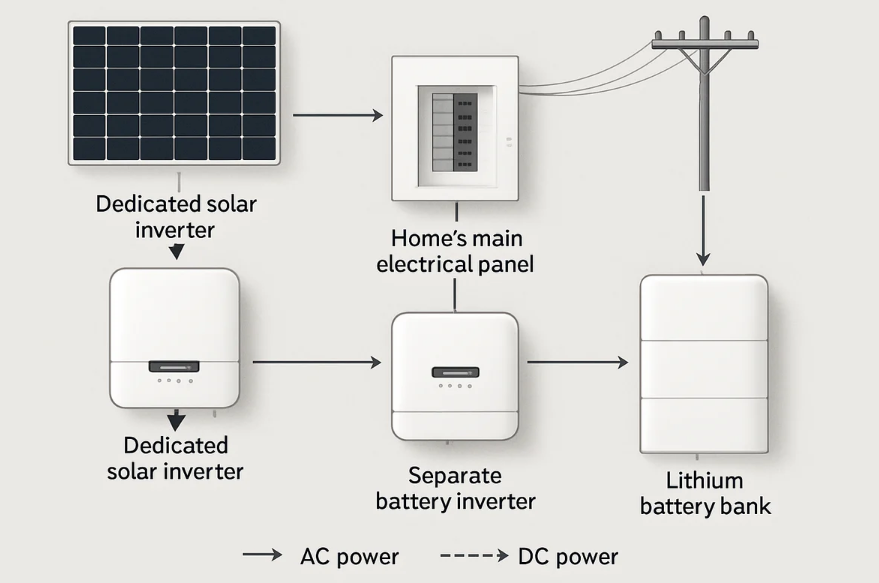

System losses take another slice. Energy entering a battery passes through conversion electronics. Energy leaving passes through again. Each conversion generates heat, and heat represents lost energy. DC-coupled systems, where battery and solar share integrated inverter electronics, lose less because energy converts fewer times between generation and storage. AC-coupled retrofits, where a battery with its own inverter connects to an existing solar system with its own separate inverter, convert energy more times and lose more at each step.

The spread between a well-designed DC-coupled installation and a mediocre AC-coupled retrofit can reach 10 percentage points of round-trip efficiency. That means roughly 10 percent more energy purchased from the utility or 10 percent less backup duration from the same nominal capacity. Over ten years of daily cycling, efficiency differences add up to real money: a couple hundred dollars annually in high-rate structures.
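To put a rough number on that, this Python sketch values the extra energy a less efficient system must take in to deliver the same daily output. The 12 kWh of daily delivery, the 96 versus 86 percent round-trip figures, and the $0.40/kWh price are all assumptions chosen for illustration.

```python
def annual_loss_cost(delivered_kwh_per_day, rte_good, rte_poor, price_per_kwh):
    """Cost of the extra input energy the less efficient system needs to
    deliver the same output every day, valued at the grid price."""
    extra_in = (delivered_kwh_per_day / rte_poor
                - delivered_kwh_per_day / rte_good)
    return extra_in * 365 * price_per_kwh


# Assumptions: 12 kWh delivered daily, 96% vs 86% round trip, $0.40/kWh.
yearly = annual_loss_cost(12, 0.96, 0.86, 0.40)
print(f"About ${yearly:.0f} per year, ${yearly * 10:.0f} over ten years")
```

Under those assumptions the gap costs on the order of $200 a year, or a little over $2,000 across a decade; cheaper electricity or shallower daily cycling shrinks it proportionally.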

This is why comparing batteries purely by nameplate capacity misleads. A 13.5 kWh battery with 96 percent efficiency delivers more usable energy than a 14 kWh battery with 88 percent efficiency despite the smaller headline number. The comparison that matters is usable capacity after accounting for depth-of-discharge limits and round-trip efficiency.
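A Python sketch of that comparison. It assumes the same 90 percent depth-of-discharge limit for both units and multiplies through by round-trip efficiency, a simplification that treats efficiency as a fixed per-cycle haircut. It also computes the break-even nameplate at which a larger, less efficient unit catches up.

```python
def usable_kwh(nameplate_kwh, depth_of_discharge, round_trip_eff):
    """Energy a full cycle actually puts to work after DoD and losses."""
    return nameplate_kwh * depth_of_discharge * round_trip_eff


DOD = 0.90  # assumed identical limit for both units

efficient = usable_kwh(13.5, DOD, 0.96)
larger = usable_kwh(14.0, DOD, 0.88)
# Nameplate an 88%-efficient unit needs just to match the 13.5 kWh / 96% unit.
break_even_kwh = 13.5 * 0.96 / 0.88

print(f"13.5 kWh @ 96%: {efficient:.2f} kWh usable")
print(f"14.0 kWh @ 88%: {larger:.2f} kWh usable")
print(f"Break-even nameplate at 88%: {break_even_kwh:.1f} kWh")
```

Past roughly 14.7 kWh of nameplate, the less efficient unit pulls ahead on usable energy per cycle, though it still loses more of every kilowatt-hour that goes in.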

Temperature

Batteries perform optimally at room temperature. They frequently get installed in garages, utility rooms, and outdoor enclosures that see nothing close to room temperature.

Cold reduces available capacity temporarily. A lithium battery rated for 13.5 kWh at 70°F might deliver 11 kWh at 40°F and 9 kWh at 20°F. The capacity returns when temperatures rise. This matters for winter outages in cold climates, where both heating loads increase and battery capability decreases at exactly the same time.

Charging below freezing is the real problem. Cold charging causes lithium metal to plate onto cell anodes instead of intercalating into the graphite structure the way it should. This plating is permanent: each cold-charging event reduces capacity for good. Battery management systems on modern equipment refuse to accept charge below safe temperatures, which protects the cells. The protection has consequences, though. Solar panels generating power on a cold winter morning may have nowhere to send it. The battery sits there, refusing to charge, while perfectly good solar production goes to waste or flows to the grid at whatever pittance the utility offers for exports.

Installations in cold climates either locate batteries in heated spaces, add heating elements to battery enclosures, or accept reduced winter performance and wasted winter production. Each approach has costs. Heated indoor installation means running conduit and finding interior wall space. Heating elements consume energy and add complexity. Accepting reduced performance means sizing larger to compensate for winter derating. None of these solutions is free.
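The charge-gating behavior can be sketched as a simple decision function in Python. The freezing-point cutoff follows the text, and the heater branch mirrors the heated-enclosure option above. The names and the hard on/off threshold are hypothetical; real battery management systems use manufacturer-specific limits and often taper charge current near the cutoff rather than switching abruptly.

```python
from enum import Enum


class ChargeAction(Enum):
    CHARGE = "accept solar charge"
    HEAT_FIRST = "run enclosure heater, charge once cells warm"
    REFUSE = "refuse charge; surplus solar serves loads, exports, or is curtailed"


# Hypothetical cutoff: no charging below freezing (32 F / 0 C).
MIN_CHARGE_TEMP_F = 32.0


def charge_decision(cell_temp_f: float, has_heater: bool) -> ChargeAction:
    """Decide what a cold-protected battery does with incoming solar."""
    if cell_temp_f < MIN_CHARGE_TEMP_F:
        return ChargeAction.HEAT_FIRST if has_heater else ChargeAction.REFUSE
    return ChargeAction.CHARGE


for temp_f in (20.0, 45.0):
    for heater in (False, True):
        action = charge_decision(temp_f, heater)
        print(f"{temp_f:.0f} F, heater={heater}: {action.value}")
```

The heater branch is where the tradeoff above shows up: the battery gets to charge, but some of the morning's solar goes into warming the cells first.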

Heat accelerates degradation in a different way. Cold effects are temporary and reversible except for the charging damage. Heat effects are permanent and cumulative. A battery installed in an Arizona garage where summer temperatures routinely exceed 110°F ages faster than an identical battery in a Seattle basement. There is no recovery period when autumn arrives. The accelerated aging that happened during summer persists forever.

Warranties contain temperature specifications that real-world installations frequently violate. Whether manufacturers actually deny claims based on documented operation outside specified ranges varies by company and probably by the mood of whoever reviews the claim. The safe approach involves either locating batteries in climate-controlled spaces or accepting that effective warranty duration may prove shorter than the paperwork suggests.

Grid-Tied Versus Off-Grid

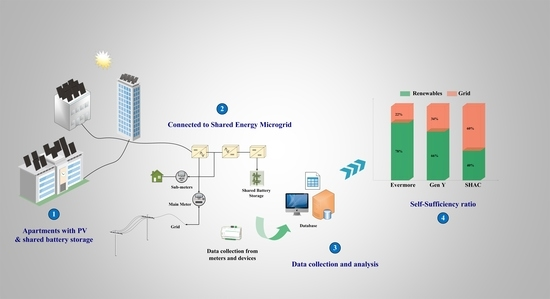

Grid-tied systems with decent net metering treat the utility as an infinitely large, perfectly efficient, always-available battery. Export excess solar production when the sun shines, import it back when the sun sets. The grid handles storage. Physical batteries in this configuration exist for outage backup and maybe for time-of-use arbitrage where rate structures make that worthwhile. Capacity requirements stay modest because the heavy lifting happens elsewhere.

This math worked beautifully for years. Then the utilities started changing the deal.

California's NEM 3.0 landed like a bomb on the residential solar industry. Solar exports that were once credited at full retail rates, 30 to 40 cents per kilowatt-hour in some territories, suddenly earned near-wholesale rates of 4 to 8 cents for new customers. The economics of grid-tied solar without storage collapsed overnight. Sending excess production to the grid and buying it back in the evening went from reasonable strategy to financial self-harm. Batteries that had been optional backup devices became essential system components.

Where a grid-tied homeowner in a state with good net metering might reasonably install solar without any battery, a Californian with the same consumption patterns now needs 15 to 20 kWh of storage just to make the system economics work. The battery captures midday production that would otherwise export at terrible rates and shifts it to evening hours when grid electricity costs four or five times as much. Without that shift, the solar investment barely pencils out.
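A minimal Python sketch of that shift. The 6-cent export credit sits inside the 4 to 8 cent range quoted above; the 12 kWh shifted per day, the 90 percent round-trip efficiency, and the 35-cent evening rate are assumptions, and real tariffs value exports and imports hour by hour rather than at one flat price.

```python
def daily_shift_value(stored_kwh, round_trip_eff, export_rate, evening_rate):
    """Value of storing midday solar for evening use instead of exporting it.
    Stored energy forgoes the export credit; what comes back out offsets
    evening grid purchases."""
    delivered = stored_kwh * round_trip_eff
    return delivered * evening_rate - stored_kwh * export_rate


# Assumptions: 12 kWh shifted daily, 90% round trip, 6-cent export credit,
# 35-cent evening rate.
daily = daily_shift_value(12, 0.90, 0.06, 0.35)
print(f"${daily:.2f} per day, about ${daily * 365:,.0f} per year")
```

Under these assumptions the battery earns roughly $3 a day, a little over $1,100 a year, before counting any backup value.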

Other states watch California and adjust their own policies accordingly. The trend runs clearly toward reduced net metering compensation and increased time-of-use rate complexity everywhere. Batteries that make marginal sense today will likely make stronger sense in 2030.

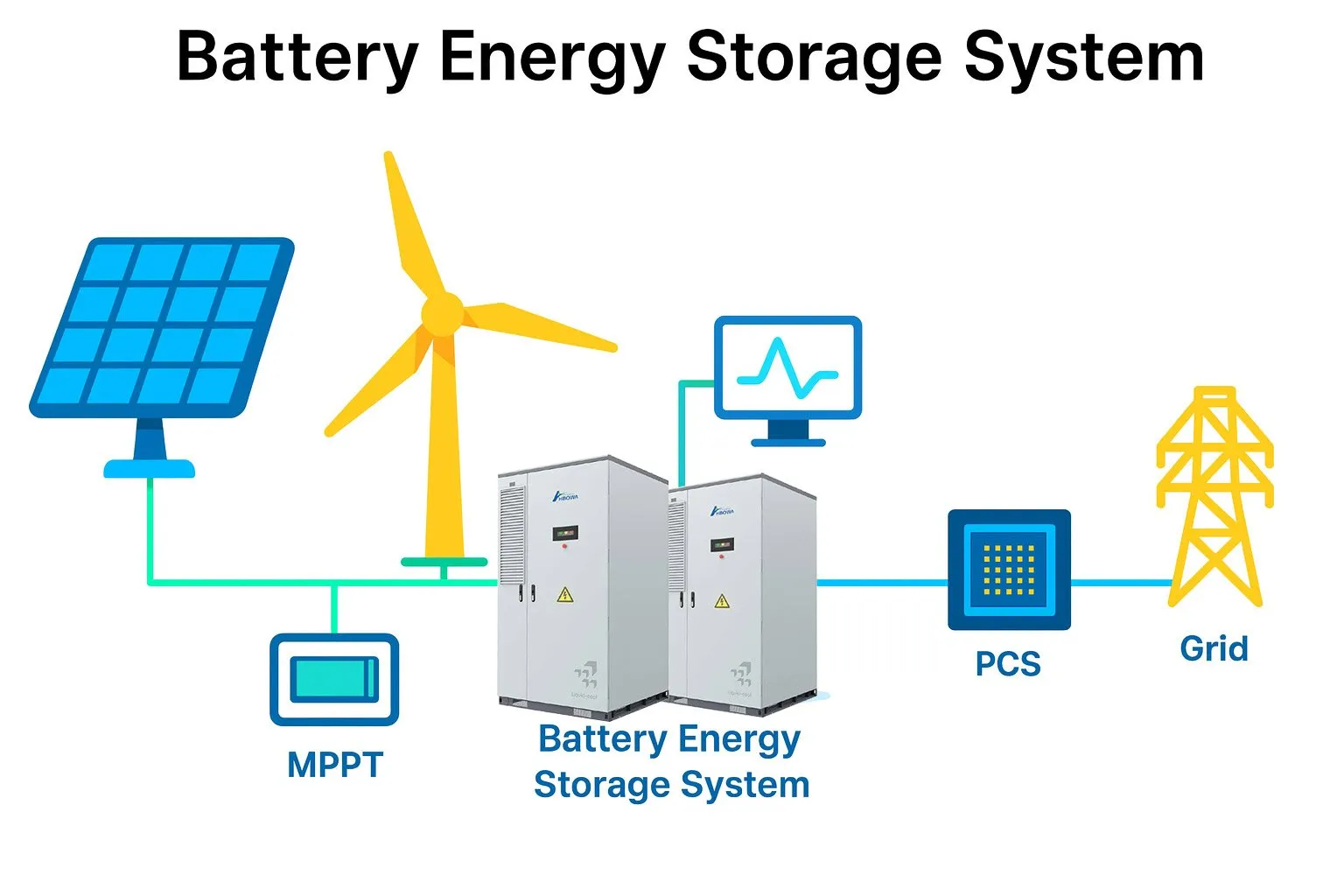

Off-grid systems face the most demanding sizing requirements because no utility backup exists at all. Extended cloudy weather means no recharging. The battery bank must carry loads through multiple days of minimal solar production. Where a grid-tied Californian might need 15 kWh for economic optimization, an off-grid cabin in the Pacific Northwest with similar consumption patterns needs 35 or 40 kWh to survive a week of November clouds without running a generator.
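The autonomy arithmetic fits in a few lines of Python. The 5 kWh daily load (in line with the essentials figure earlier), seven days of clouds, and 90 percent usable capacity are assumptions; the sketch ignores whatever trickle the panels produce under overcast skies and any inverter losses, which pull in opposite directions.

```python
def autonomy_capacity_kwh(daily_kwh, days, depth_of_discharge):
    """Nameplate capacity needed to carry `daily_kwh` for `days` with
    no solar input, without discharging past the depth-of-discharge limit."""
    return daily_kwh * days / depth_of_discharge


# Assumptions: ~5 kWh/day cabin load, 7 cloudy days, 90% usable.
need = autonomy_capacity_kwh(5.0, 7, 0.90)
print(f"{need:.1f} kWh nameplate")
```

That lands at about 39 kWh of nameplate, inside the 35 to 40 kWh range above.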

The relationship between battery capacity and solar array size also changes for off-grid. Grid-tied systems can oversize arrays relative to batteries because excess production simply exports. Wasteful maybe, given poor export rates, but not catastrophic. Off-grid systems waste any production that exceeds what batteries can absorb and loads can consume. Oversized arrays paired with undersized batteries generate power that has nowhere to go. Undersized arrays paired with oversized batteries never fully charge the bank. The balance matters more when there is no grid to absorb mistakes.
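A day-by-day energy balance shows why the pairing matters. This Python sketch uses a made-up week of production (three sunny days, three cloudy, one sunny) and an 8 kWh daily load, all assumptions. Working in whole days is coarse: real systems can clip at midday even when the daily totals balance, so the sketch understates waste.

```python
def simulate(production_kwh, load_kwh, capacity_kwh, start_kwh):
    """Day-by-day off-grid energy balance (daily totals only).
    Returns (wasted_kwh, unmet_kwh, days_full, final_kwh)."""
    stored = start_kwh
    wasted = unmet = 0.0
    days_full = 0
    for produced in production_kwh:
        stored += produced - load_kwh
        if stored >= capacity_kwh:      # battery full: surplus has nowhere to go
            wasted += stored - capacity_kwh
            stored = capacity_kwh
            days_full += 1
        elif stored < 0:                # battery empty: load goes unserved
            unmet += -stored
            stored = 0.0
    return wasted, unmet, days_full, stored


WEEK = [20, 20, 20, 4, 4, 4, 20]  # kWh/day from a full-size array (assumed)
LOAD = 8                          # kWh/day cabin load (assumed)

scenarios = {
    "big array, 10 kWh bank": (WEEK, 10, 10),
    "half array, 40 kWh bank": ([p / 2 for p in WEEK], 40, 20),
    "big array, 30 kWh bank": (WEEK, 30, 15),
}
results = {}
for name, (production, capacity, start) in scenarios.items():
    results[name] = simulate(production, LOAD, capacity, start)
    wasted, unmet, days_full, final = results[name]
    print(f"{name}: wasted {wasted:.0f} kWh, unmet {unmet:.0f} kWh, "
          f"full on {days_full} days, ends at {final:.0f} kWh")
```

The big array with a small bank throws away 38 kWh and still runs short during the cloudy stretch; the half-size array never fills its 40 kWh bank and ends the week lower than it started; the big array with 30 kWh wastes less and never runs short.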

Commercial Is Different

Residential batteries exist for backup power and rate arbitrage. Commercial batteries exist primarily to reduce demand charges.

Demand charges work differently from the energy charges that dominate residential bills. Energy charges bill for total consumption. Demand charges bill for peak consumption rate, measured in 15- or 30-minute intervals depending on the utility. The single highest interval during a billing cycle, just one 15-minute period of heavy draw, sets the demand charge for the entire month.

A small factory that averages 200 kW consumption but spikes to 500 kW for fifteen minutes when a big motor starts pays demand charges based on that 500 kW spike. At $15 per kW of demand, that fifteen-minute spike costs $7,500 monthly, $90,000 annually. The equipment that caused the spike might only run a few times per day. The demand charge happens regardless.
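The billing rule fits in a single function. This Python sketch rebuilds the example above from a month of 15-minute readings; the steady 200 kW baseline and the single 500 kW interval are simplified assumptions, and the $15/kW rate is the one quoted above.

```python
def demand_charge(interval_kw, rate_per_kw):
    """Monthly demand charge: only the highest interval matters."""
    return max(interval_kw) * rate_per_kw


INTERVALS_PER_DAY = 96          # 24 h of 15-minute intervals
month = [200.0] * (30 * INTERVALS_PER_DAY)
month[1234] = 500.0             # one interval when the big motor starts

monthly = demand_charge(month, rate_per_kw=15.0)
spike_kwh = (500.0 - 200.0) * 0.25  # extra energy in that one interval
print(f"Demand charge: ${monthly:,.0f}/month, ${monthly * 12:,.0f}/year")
print(f"Extra energy behind the spike: {spike_kwh:.0f} kWh")
```

The spike adds only 75 kWh of energy, yet it sets a $7,500 monthly charge; holding that interval to 200 kW would cut the charge to $3,000.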

Commercial battery systems shave these peaks. Monitoring systems track real-time facility load. When consumption approaches the previous month's demand peak, batteries discharge to reduce grid draw. The facility still consumes the same energy. The peak grid draw drops. Demand charges drop proportionally.
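The dispatch logic described above can be sketched as a greedy loop in Python: discharge by however much load exceeds the threshold, capped by inverter power and remaining energy. The threshold (standing in for the previous month's peak or a chosen target), battery size, power limit, and load profile are hypothetical, and recharging between peaks is left out for brevity.

```python
def peak_shave(load_kw, threshold_kw, battery_kwh, max_discharge_kw,
               interval_h=0.25):
    """Greedy peak shaving over 15-minute intervals: discharge by however
    much load exceeds the threshold, capped by inverter power and by the
    energy left. Returns (grid draw per interval, kWh remaining).
    Recharging between peaks is not modeled."""
    remaining = battery_kwh
    grid = []
    for load in load_kw:
        excess = max(0.0, load - threshold_kw)
        discharge = min(excess, max_discharge_kw, remaining / interval_h)
        remaining -= discharge * interval_h
        grid.append(load - discharge)
    return grid, remaining


# Hypothetical facility: 200 kW base with a 30-minute run at 400 kW.
load = [200, 200, 400, 400, 200, 200]
grid, left = peak_shave(load, threshold_kw=300, battery_kwh=100,
                        max_discharge_kw=150)
print(f"Peak grid draw: {max(load)} kW -> {max(grid):.0f} kW, "
      f"{left:.0f} kWh left in the battery")
```

The facility still uses the same energy; the battery simply covers the 100 kW above the threshold for two intervals, using 50 kWh.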

Sizing commercial systems requires analyzing twelve to twenty-four months of interval load data to identify peak patterns. A facility with 400 kW peaks lasting 20 minutes needs much less battery capacity than a facility with 400 kW peaks lasting two hours. Peak magnitude matters. Peak duration matters. Peak frequency matters. How much reduction the facility targets matters.
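Sizing from interval data comes down to two numbers: the largest excess over the target (inverter power) and the total excess energy (battery capacity). This Python sketch compares a 20-minute and a 2-hour peak of 400 kW; the 250 kW base load and the 300 kW target are assumptions. It uses 5-minute readings so the 20-minute peak fits cleanly, which is conservative: keeping every 5-minute reading under target also keeps every 15-minute average under it.

```python
def shave_requirements(load_kw, target_kw, interval_h):
    """Inverter power and battery energy needed to hold every reading
    at or below target_kw, assuming no recharge during the peak."""
    excess = [max(0.0, kw - target_kw) for kw in load_kw]
    return max(excess), sum(excess) * interval_h


FIVE_MIN_H = 5 / 60
BASE = [250] * 12  # one hour at an assumed 250 kW base load

short_peak = BASE + [400] * 4 + BASE    # 20 minutes at 400 kW
long_peak = BASE + [400] * 24 + BASE    # 2 hours at 400 kW

short_kw, short_kwh = shave_requirements(short_peak, 300, FIVE_MIN_H)
long_kw, long_kwh = shave_requirements(long_peak, 300, FIVE_MIN_H)
print(f"20-minute peak: {short_kw:.0f} kW, {short_kwh:.0f} kWh")
print(f"2-hour peak:    {long_kw:.0f} kW, {long_kwh:.0f} kWh")
```

Both peaks need the same 100 kW of output, but the two-hour peak needs six times the energy, about 200 kWh versus 33 kWh.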

The math depends entirely on specific load profiles that vary wildly across industries and even across facilities within the same industry. A cold storage warehouse with constant refrigeration loads has different peaks than a machine shop with intermittent heavy equipment use. Generic sizing rules do not transfer. Each commercial installation requires custom analysis.

RVs

The 12-volt world operates on different vocabulary entirely. Amp-hours instead of kilowatt-hours. Deep-cycle marine batteries and lithium drop-in replacements and complicated debates about alternator charging and solar regulators.

Weekend RV use with basic 12-volt loads consumes roughly 80 to 100 amp-hours daily. A 12-volt compressor refrigerator draws maybe 4 to 5 amps while running, cycling on and off throughout the day. LED lights draw almost nothing. The water pump draws a couple amps when it runs, which is not often. USB charging for phones and tablets barely registers.
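A minimal amp-hour budget in Python. The 4 to 5 amp fridge draw and the "couple amps" pump come from the text; the fridge's running hours per day and every other entry are illustrative assumptions, as is the 12.8 V nominal voltage typical of a LiFePO4 "12-volt" battery.

```python
# Daily 12 V amp-hour budget: (load, amps while on, hours on per day).
RV_LOADS = [
    ("compressor fridge", 4.5, 16),  # 4-5 A from the text; ~2/3 duty cycle assumed
    ("LED lights", 1.0, 5),          # assumed
    ("water pump", 2.0, 0.5),        # "a couple amps"; short runs assumed
    ("USB charging", 1.0, 4),        # assumed
]
NOMINAL_V = 12.8  # typical LiFePO4 "12 V" battery

daily_ah = sum(amps * hours for _, amps, hours in RV_LOADS)
print(f"{daily_ah:.1f} Ah/day, about {daily_ah * NOMINAL_V / 1000:.2f} kWh")
```

The fridge accounts for most of the total, so its duty cycle in hot weather moves the budget far more than lights or charging.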

A 200 amp-hour lithium battery provides roughly two days of this consumption with appropriate depth-of-discharge margin. Paired with 300 to 400 watts of roof-mounted solar, such a system recharges during daytime parking and runs indefinitely in sunny weather. This is the sweet spot for weekend and vacation use. Enough capacity for a couple cloudy days without solar input. Not so much capacity that the system costs a fortune or weighs down the vehicle.
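The two-day autonomy figure and the solar recharge can be checked in Python. The 200 Ah battery and the 300 to 400 W array come from the text; the 90 percent usable fraction, 90 Ah daily draw, four peak sun hours, 0.8 system derate, and 12.8 V nominal voltage are assumptions.

```python
BATTERY_AH = 200
USABLE_FRACTION = 0.9  # assumed depth-of-discharge margin
DAILY_AH = 90          # middle of the 80-100 Ah range
NOMINAL_V = 12.8       # typical LiFePO4 "12 V" battery


def solar_ah_per_day(array_w, peak_sun_hours, derate, volts=NOMINAL_V):
    """Amp-hours an array returns per day; `derate` lumps together
    controller, wiring, temperature, and panel-angle losses (assumed)."""
    return array_w * peak_sun_hours * derate / volts


autonomy_days = BATTERY_AH * USABLE_FRACTION / DAILY_AH
print(f"Autonomy: {autonomy_days:.1f} days")
for watts in (300, 400):
    ah = solar_ah_per_day(watts, peak_sun_hours=4, derate=0.8)
    print(f"{watts} W array: ~{ah:.0f} Ah/day vs {DAILY_AH} Ah used")
```

At four peak sun hours, 300 W returns about 75 Ah and 400 W about 100 Ah, bracketing a 90 Ah day; sunnier days push both higher.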

Extended boondocking or full-time RV living with residential-style appliances pushes beyond what 12-volt architecture reasonably supports. Running an air conditioner off batteries requires an inverter sized for the startup surge, a battery bank sized for the continuous draw, and solar capacity to recharge it all the next day. The numbers get large quickly. 400 to 600 amp-hours at 12 volts, or more manageably, 200 to 300 amp-hours at 24 volts, or a proper 48-volt system with a big inverter.

At that point the RV electrical system starts resembling a small off-grid cabin installation. The complexity and cost scale accordingly.
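Why higher voltage is "more manageable" shows up in a short Python sketch: the same stored energy at 12, 24, and 48 volts, and the battery-side current for one AC load through an inverter. The 1,500 W running load, 90 percent inverter efficiency, and the 48-volt amp-hour figure are assumptions for illustration.

```python
def bank_kwh(amp_hours, volts):
    """Stored energy of a battery bank in kWh."""
    return amp_hours * volts / 1000


def dc_current_a(ac_load_w, volts, inverter_eff=0.9):
    """Battery-side current for an AC load through an inverter
    (efficiency assumed)."""
    return ac_load_w / inverter_eff / volts


for ah, v in ((500, 12), (250, 24), (125, 48)):
    print(f"{ah} Ah @ {v} V = {bank_kwh(ah, v):.1f} kWh; "
          f"1500 W AC load draws {dc_current_a(1500, v):.0f} A")
```

Quadrupling the voltage cuts current to a quarter for the same power, which is what keeps cable sizes and fusing manageable at 48 volts.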

The mobile market rewards different manufacturers than the residential market. Tesla does not sell a 12-volt RV battery. Enphase does not either. Battle Born built its reputation here, offering premium quality and responsive customer service at premium prices. A 100 amp-hour Battle Born runs around $900. Renogy dominates the budget-conscious segment with acceptable quality at maybe $350 for comparable capacity. The products are not identical. Build quality differs. Warranty support differs. The question is whether those differences matter for a particular application and budget.

Smaller brands have emerged in the middle space. SOK offers strong specs at moderate prices. Epoch has developed a following. The lithium RV battery market has matured enough that multiple legitimate options exist at various price points.

The Actual Answer

The American residential market has converged on 10 to 15 kWh per unit because that range matches what typical households actually need for typical purposes.

One battery in this class handles essential loads through multi-day outages without breaking a sweat. Refrigerator, lights, internet, phone charging, maybe a few other small loads. It captures enough excess solar for meaningful time-of-use arbitrage in markets where rate differentials justify the investment. It fits in a standard garage or utility room without dominating the space. It prices within the range where payback calculations at least approach break-even over system lifetime for buyers who care about economics.

Whole-home backup with air conditioning during extended outages requires two to four batteries, 30 to 60 kWh total capacity. This serves a smaller market. Buyers in this segment usually know who they are. Large homes with large loads. Unreliable grid service that makes extended outages routine rather than exceptional. Medical equipment that cannot tolerate power interruption. Climate extremes where loss of heating or cooling creates genuine safety concerns rather than mere discomfort.

The trap involves buying capacity for scenarios that will never occur. A system sized for four days of whole-home autonomy that never experiences an outage lasting more than six hours provides no benefit over a system half its size, yet the excess capacity still ages on the calendar whether it cycles or not. Money spent on capacity that sits unused is money that could have gone toward something useful.

Solar salespeople have commission structures that reward larger sales. Online calculators get designed by companies that manufacture and sell batteries. Neither source has strong incentives to recommend smaller systems. The buyer has to provide that corrective force by thinking clearly about what the battery actually needs to accomplish.

What loads must run? For how long? Under what conditions? Answer those questions honestly, without the influence of marketing scenarios and worst-case fantasies, and the sizing question answers itself. The formula takes thirty seconds. The clarity takes longer but matters more.